Nick Azar, Jack Gomberg, & Antonia Sciarrota

Remember back in October, when everyone was still in that “wow, I can’t believe we’re actually Freshmen/Seniors/etc” phase? Somewhere during that time period we took this massive health survey. Frankly, I don’t remember doing it, but I’m sure some of you have seen the posters hanging around the front desk and the deans’ offices that show off the results.

These posters sparked some interesting debates, none of which seemed to be centered on the actual information at hand. Students who stopped to read the posters didn’t seem convinced by the claims shown, but rather rejected them outright. Almost every student denied the validity of the messages, saying everything from, “That’s ridiculous, it’s way more than that,” to “Oh, way to insert ‘Freshmen’ in tiny text like a medical side effect.” The topic made its way to our AP Stat class. We decided to test the claims made by the posters by collecting our own random sample of data and then using what we had learned in the class to estimate how plausible the original numbers were.

Our group decided to test the poster that claimed “Only 20% of Latin High Schoolers have ever cheated on a test/quiz.” Using that claim as our null hypothesis, we set out on our journey to become “those annoying seniors who bug you to take their Stat survey” and hopefully prove something about our school’s moral code.

First, we constructed our survey. The original survey asked if students had cheated on a test or quiz; we thought that this wording presented possible bias. The word “cheat” is extremely negative and most likely prompts feelings of guilt or shame associated with admitting to doing such a thing. Instead, our survey asked if the student had knowingly received help that they should not have. We specifically avoided the word “cheat” so that such a loaded word wouldn’t skew the answers. Furthermore, the original survey question left out other assignments such as projects and essays. We formatted our survey as a set of Y/N questions asking whether the student had received such help on a quiz, test, essay, and project, each individually.

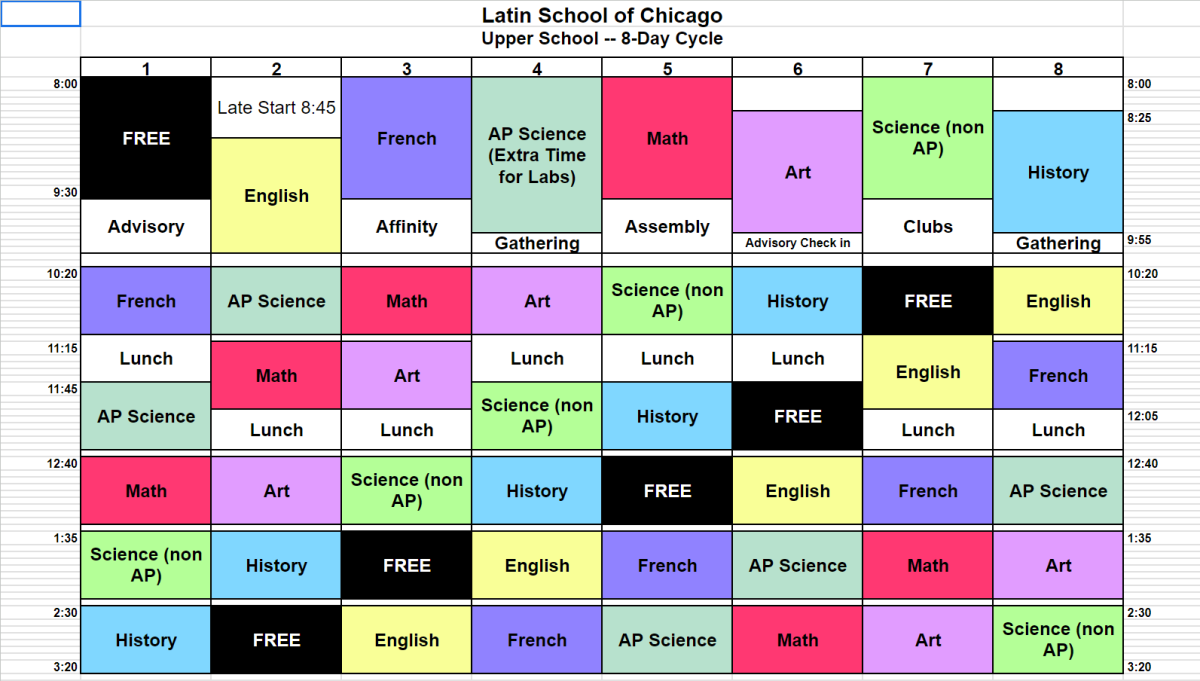

We randomly selected four different advisories to participate in our survey. We got 32 responses total, a decent number to draw sample data from. We sorted out the data and wound up with this:

As you can tell, students seemed to cheat most on quizzes. Additionally, essays were the second most cheated-on assignment, just ahead of tests. While this is interesting, it doesn’t help us question the findings of the Health Survey. For that, we need to see how many people cheated on a test or quiz.

40.6% of students who took our survey said that they had received help that they knew they shouldn’t have on a test or quiz. That’s a little more than double the claim made by the posters. However, this was a statistics project and you can’t support a claim by saying “it’s a lot different than they said.” We needed some statistical analysis. After some calculations, we found that if the Health Survey’s 20% figure were true, the probability of getting a sample at least as extreme as ours would be about 0.18%, a very small probability! In fact, this is so unlikely that we were able to reject the claim made by the Health Survey. Because of this statistical significance, we have reasonable doubt about the 20% cheating statistic from the Health Survey.
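For anyone who wants to check the arithmetic, here is a minimal sketch of one way to get a number like that. It assumes a one-sided one-proportion z-test, which is our guess at the kind of calculation involved rather than a record of the exact steps. The count of 13 "yes" answers is also an assumption: it is the only whole number out of 32 responses that rounds to 40.6%. The function name `one_proportion_z_test` is just a label made up for this example.

```python
import math

def one_proportion_z_test(successes, n, p0):
    """One-sided (upper-tail) z-test for a single proportion.

    Returns the sample proportion, the z statistic, and the
    probability of a sample proportion at least this large
    if the true proportion were p0.
    """
    p_hat = successes / n
    # Standard error under the null hypothesis (uses p0, not p_hat)
    se = math.sqrt(p0 * (1 - p0) / n)
    z = (p_hat - p0) / se
    # Upper-tail area of the standard normal distribution
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return p_hat, z, p_value

# Assumed inputs: 13 of 32 respondents (about 40.6%), null claim of 20%
p_hat, z, p_value = one_proportion_z_test(13, 32, 0.20)
print(f"sample proportion = {p_hat:.3f}")
print(f"z = {z:.2f}")
print(f"one-sided p-value = {p_value:.4f}")
```

Under these assumptions the p-value comes out near 0.0018, in line with the roughly 0.18% quoted above.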

However, there are a number of confounding variables in how the health survey was set up as well as how our own survey was set up. The health survey was conducted in October, while our survey was conducted in May. It is possible that the number of people who have cheated really did double during that time. Additionally, we can’t see the separation between grades in our data. While we did stratify our sampling, we did not collect our data in a way that let us distinguish between grades, nor did the health survey release data in such a manner. Therefore, we can’t draw any conclusions on grades, or be certain that grade levels aren’t a confounding variable. Finally, we sampled by advisory, and advisories tend to be self-selecting. While this did help us organize and collect sample data more easily, this method wasn’t the best if we were looking for a purely individual, random sample of each grade. Our data did show, however, that there was a significant difference between what the posters said and what our own data showed. This could point to a way to improve how the school collects data on such a touchy subject, or imply that the time of year the data is taken could yield extremely different results. However, without further study, we can’t be certain.