Margo Williams

Co-Editor-in-Chief

Meet your New Governors.

Dave Willner, who spearheaded the initiative to establish online norms at Facebook, said “Because of the volume of decisions — many millions per day — the approach [to online censorship] is ‘more utilitarian than we are used to in our justice system…It’s fundamentally not rights-oriented.’”

Hasan Minhaj featured another content moderator on his Netflix show, Patriot Act. She “goes through over 2,000 posts an hour… [and has] 1.6 seconds to decide if a post is violent, pornographic, or doesn’t adhere to community guidelines.” So much content is posted online every minute of every day that censorship of it rests on rules with little flexibility. There’s no time to consider context.

As a junior last year, I took Mr. Greer’s Modern China sophomore history course, and at the end of the year, I approached him about doing an ISP together. He agreed, and we decided to study Constitutional law and delve into trailblazing privacy cases. We began to talk about how social media companies view and regulate their online content. What we found is that social media corporations can take down whatever content they want, and they don’t need to provide a reason. Technically, you could post a picture of your puppy on Instagram and the company could delete it without cause. On almost all platforms, you would not be able to appeal the decision.

On November 28th, Mr. Greer and I Skyped with the author of two articles we read for our ISP. Kate Klonick is an assistant professor at St. John’s University Law School and holds a PhD from Yale Law. Her articles cover how social media regulation and online censorship affect freedom of expression.

Klonick is the trailblazer in her field. Aside from the work of one law professor at American University, her article “The New Governors: The People, Rules, and Processes Governing Online Speech” is the first piece of academic writing to delve into the internal mechanisms of social media corporations’ online content moderation systems.

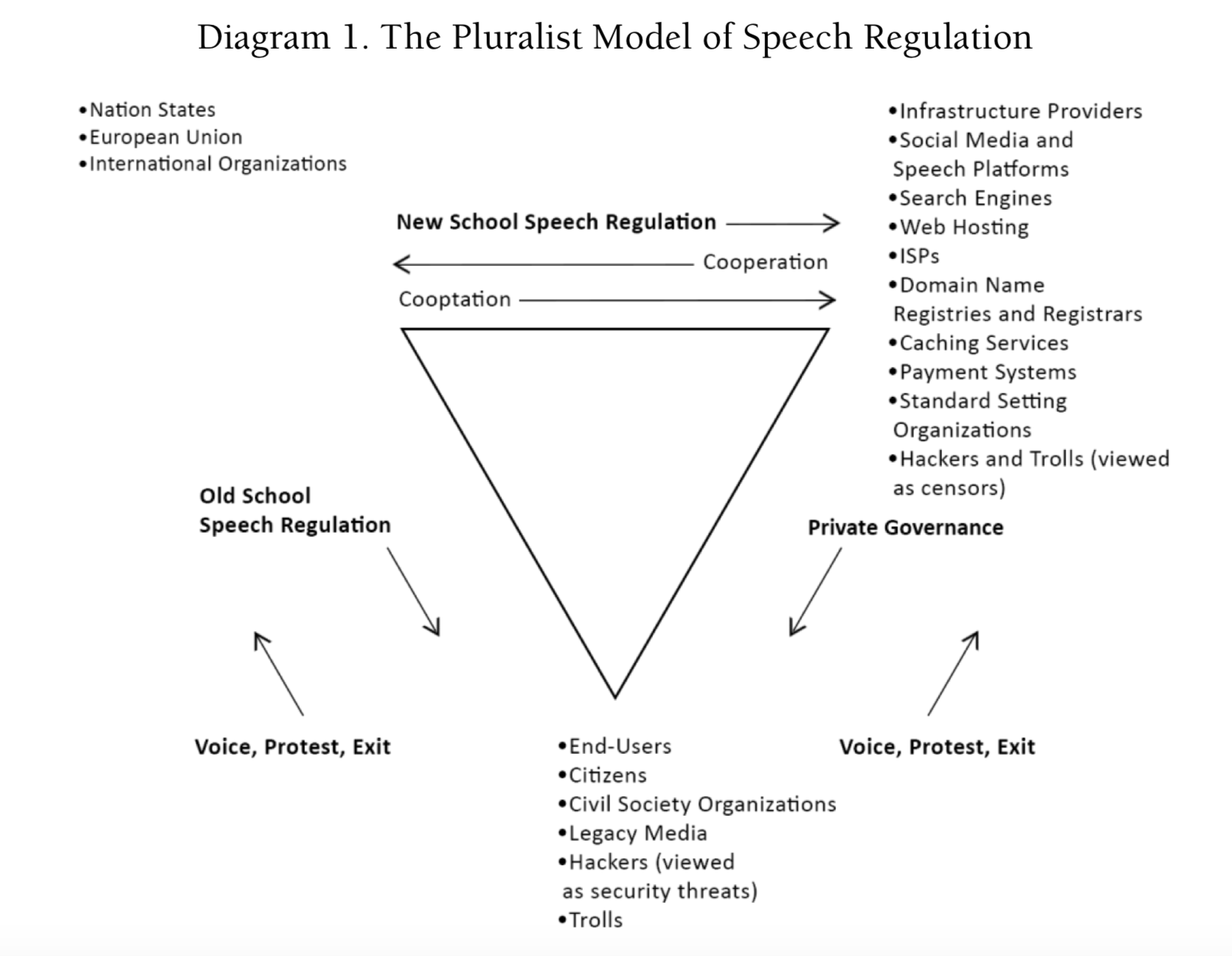

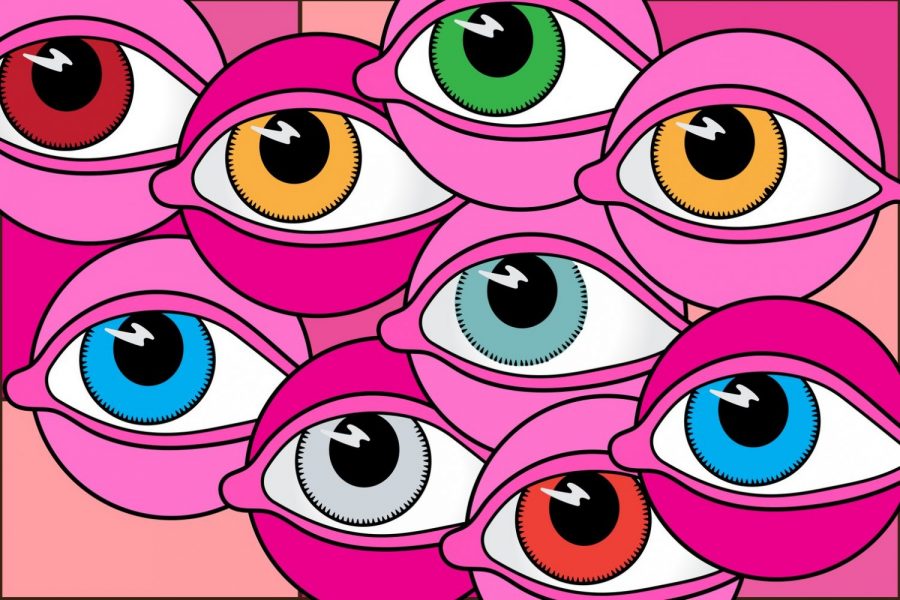

The triangle diagram below is taken from an article by Jack Balkin, a professor of Constitutional Law and the First Amendment at Yale Law School and a close colleague of Professor Klonick. It outlines three primary players: 1) international organizations, 2) citizens/users, and 3) new, private governors (most often social media and speech platforms).

“It’s not that regulation [of online content] would be bad necessarily,” Professor Klonick said, “it’s just that it has to be really careful and well thought out.” While it seems logical to ask the government to regulate these big companies, doing so would “butt up against First Amendment concerns,” and the government simply isn’t interested in taking on the liability of being involved, according to Klonick. The question, instead, is “how do you incentivize the private companies to… legitimiz[e]… the democratic role that they’re playing in speech?” The end goal should be to “get them to reflect our norms instead of it being this oligarchy of private companies making these decisions for us.”

Social media companies gain value as more and more people use their platforms, because the point is to connect with more people. This phenomenon is called ‘the network effect,’ and it makes social media platforms particularly prone to becoming monopolies. Professor Klonick said the only feasible way to remove these monopolies as of now is “to start basically breaking up functionalities.” You’d have to split apart Facebook’s functions, like instant messaging and private groups. The concern, then, is that Facebook wouldn’t first figure out which functionality is actually drawing users. When AOL was sued under antitrust claims, the company broke off its instant messenger function, AIM, before figuring out that users were primarily using AOL just to access AIM. Prior to hearing this story last week, I had only ever thought of AOL as the platform my grandmother’s email is on. Needless to say, AOL didn’t fare well.

How, then, do we hold social media companies accountable for the functionalities they do have, regardless of whether they’re monopolies? Klonick and her fellow academics Jack Balkin and Jonathan Zittrain have an answer: information fiduciaries. It sounds confusing, but it’s not. Examples of fiduciaries are money managers, lawyers, and board members. They’re people who are legally and morally expected to act in the best interest of their clients. According to Klonick, they have “a special relationship that is outside the bounds of the First Amendment,” which means there is information in their possession that they’re not allowed to share. Klonick and her peers are working with legislatures and Congress to draft legislation that would give these platforms fiduciary status. “It’s that it’s not just that fiduciaries [are] a nice concept because… this… sense of trust—although I think that sells it at the end of the day—but what makes it really smart,” Klonick said, “is that it gets around this First Amendment issue that will inevitably be raised if almost any other type of regulation is attempted.”

Admittedly, the concept of information fiduciaries is a bit abstract. Where existing fiduciaries have legal parameters, information fiduciaries are dependent on trust and good faith, not law. There is “less accountability because all this stuff [is] happening in the shadow of the law, and it’s not clear whether the law would create more accountability, or if you have to work within that shadow to create that accountability. And that’s still the question that has to be answered.”

abolandh2 • Dec 13, 2018 at 6:17 pm

An extremely informative article, Margs. This ISP sounds incredible!

obaker • Dec 13, 2018 at 4:42 pm

So enlightening, Margo!

rigbokwe • Dec 13, 2018 at 4:06 pm

Very interesting article on a topic that I, personally, didn’t know anything about beforehand. Thank you for informing me about this topic and leaving us all with a thought-provoking question. 🙂 🙂